
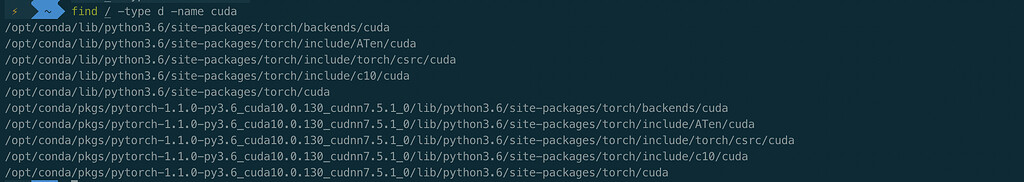

We start by setting up the host with the necessary NVIDIA drivers and CUDA software, a container runtime hook (`nvidia-container-toolkit`), and a custom SELinux policy. Then we show an example of how to run a container with Podman so that the GPUs are accessible inside the container.

This action installs the NVIDIA® CUDA® Toolkit on the system. It adds the CUDA install location as `CUDA_PATH` to `GITHUB_ENV` so you can access the CUDA install location in subsequent steps. `CUDA_PATH/bin` is added to `GITHUB_PATH` so you can use commands such as `nvcc` directly in subsequent steps.

Be aware that older versions of CUDA (<=10) don't support WSL 2. The following commands will install the WSL-specific CUDA toolkit version 11.6 on Ubuntu 22.04 AMD64 architecture: `sudo mv cuda-wsl-ubuntu.pin /etc/apt/preferences.d/cuda-repository-pin-600`. Then set up the appropriate package for Ubuntu WSL. Once complete, you should see a series of outputs that end in `done.` Congratulations! You should have a working installation of CUDA by now.

Also notice that attempting to install the CUDA toolkit packages straight from the Ubuntu repository (`cuda`, `cuda-11-0`, or `cuda-drivers`) will attempt to install the Linux NVIDIA graphics driver, which is not what you want on WSL 2. On WSL 2, the CUDA driver used is part of the Windows driver installed on the system, so care must be taken not to install this Linux driver.

Surely I can pull an image with the CUDA toolkit from Docker Hub: `docker run --rm nvidia/cuda:11.0-devel-ubuntu20.` As an update to Viacheslav Shalamov's answer, the `nvidia-container-runtime` package is now part of the `nvidia-container-toolkit`, which can also be installed with `sudo apt install nvidia-container-toolkit`; then follow the same instructions above to set `nvidia` as the default runtime.
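The WSL 2 warning about driver packages can be turned into a small pre-install check. The sketch below is only an illustration, not an official NVIDIA script. The function names `is_wsl_release` and `suggest_cuda_package` are hypothetical. It assumes that WSL kernels report a release string containing `microsoft` (as shown by `uname -r`) and that a toolkit-only package named `cuda-toolkit-11-6` is available from the NVIDIA repository you configured. It prints a suggestion and installs nothing.

```shell
#!/bin/sh
# Sketch: suggest a CUDA package depending on whether the kernel looks like WSL.

# Returns 0 if the given kernel release string looks like a WSL kernel.
# Assumption: WSL kernel releases contain "microsoft" (any letter case).
is_wsl_release() {
  case "$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')" in
    *microsoft*) return 0 ;;
    *) return 1 ;;
  esac
}

# Prints the package to suggest for the given kernel release.
# On WSL we suggest a toolkit-only package (assumed name) so that no Linux
# graphics driver is pulled in; elsewhere the "cuda" meta-package is suggested.
suggest_cuda_package() {
  if is_wsl_release "$1"; then
    echo "cuda-toolkit-11-6"
  else
    echo "cuda"
  fi
}

release=$(uname -r)
echo "Kernel release: $release"
echo "Suggested package: $(suggest_cuda_package "$release")"
```

The check does not distinguish WSL 1 from WSL 2, so treat the suggestion as a hint and confirm the package name against the repository before installing.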
Using regular Docker, I came to the conclusion that two different CUDA versions aren't compatible in the following setup: using the local GPU with, for example, CUDA 11 on the host together with a Docker environment that has an older OS version and a lower CUDA version, because the container must access the local GPU.

Download and install the latest driver for your NVIDIA GPU. Start the Docker service with `sudo service docker start`. If you installed the Docker engine directly, set up the stable repository for the NVIDIA Container Toolkit and then install the toolkit. Cuda libraries on the host are found via the `ld.so.cache` that is bound into a.
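Because the CUDA libraries are located through `ld.so.cache`, one quick way to see what the dynamic linker can resolve is to search the output of `ldconfig -p`. The following is a minimal sketch under stated assumptions: the helper name `count_libcuda` is hypothetical, and the driver library is assumed to be named `libcuda.so`, as on typical NVIDIA driver installs.

```shell
#!/bin/sh
# Sketch: count libcuda entries in a listing of the dynamic linker cache.

# Reads `ldconfig -p`-style text on stdin and prints how many lines
# mention libcuda.so. `|| true` keeps the exit status 0 when grep finds nothing.
count_libcuda() {
  grep -c 'libcuda\.so' || true
}

if command -v ldconfig >/dev/null 2>&1; then
  found=$(ldconfig -p 2>/dev/null | count_libcuda)
  echo "libcuda entries in ld.so.cache: $found"
else
  echo "ldconfig not found; cannot inspect ld.so.cache"
fi
```

Running the same script on the host and inside the container gives a rough comparison of what each side can see. A count of zero inside the container suggests the libraries were not made available to it.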

Normally, the CUDA toolkit for Linux has the device driver for the GPU packaged with it. nvidia-docker2: general question about capabilities. Through Singularity it is possible to run parallel applications on.
